The Buffet is Closing: The End of the Infinite Internet

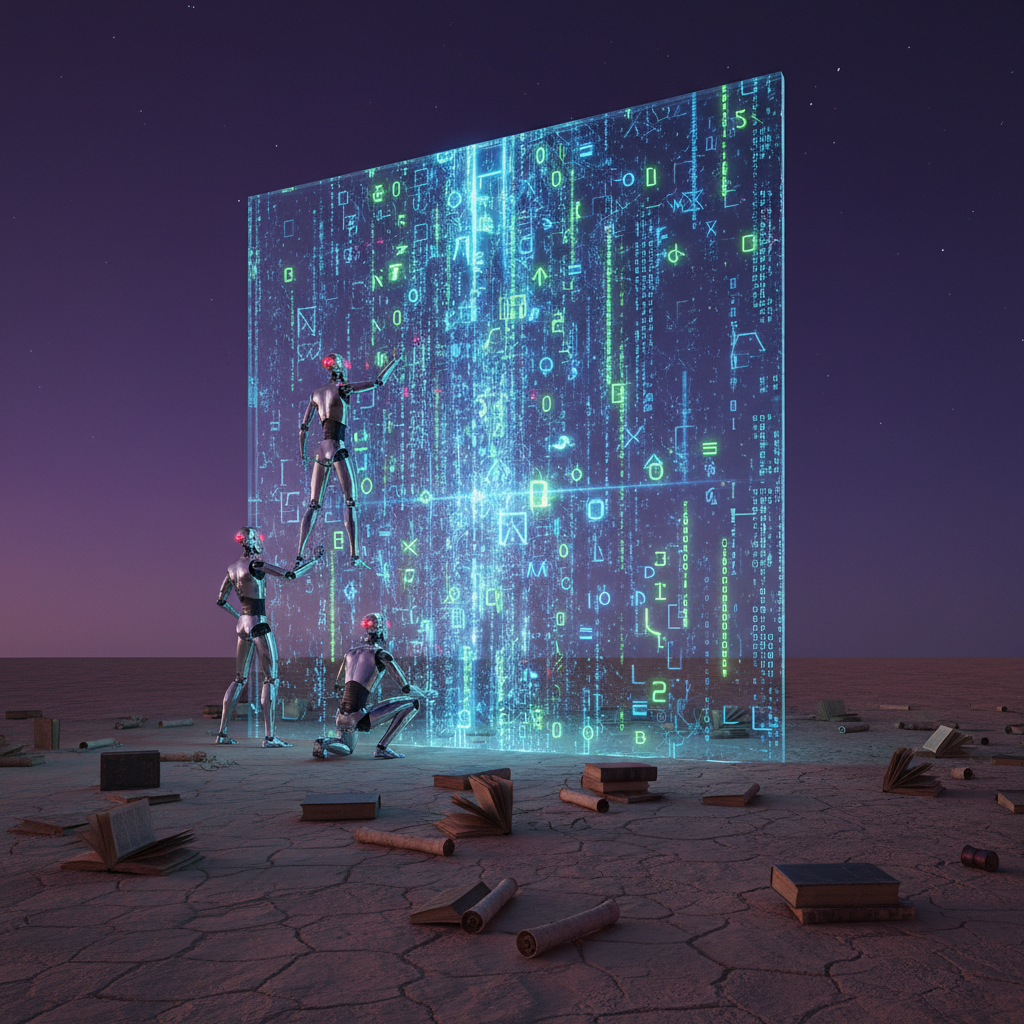

For the last decade, AI developers have treated the internet like an all-you-can-eat buffet. From the sprawling archives of Wikipedia to the chaotic threads of Reddit and the niche forums of the early 2000s, engineers at OpenAI, Google, and Meta have scraped it all. This “more is better” philosophy, grounded in what researchers call scaling laws, suggested that if you just threw more data and more compute at a transformer model, it would inevitably get smarter. It worked remarkably well for GPT-3 and GPT-4, but the industry has hit a massive, invisible barrier: The Great Data Wall.

Recent research from groups like Epoch AI suggests that tech companies may exhaust the supply of high-quality public human-generated text sometime between 2026 and 2032. We are running out of internet. The easy gains from “vacuuming up the web” are largely gone. The remaining data is either low-quality (spam and SEO farm content), private (emails and medical records), or, increasingly, generated by other AI models. This creates a dangerous feedback loop where AI begins eating its own tail.

To survive, the industry is pivoting. The era of pure “brute force” scaling is being replaced by a sophisticated focus on reasoning, logic, and curated synthetic data. We aren’t just building bigger brains anymore; we are teaching those brains how to think with less.

Scaling Laws vs. The Reality of Diminishing Returns

In 2020, research from OpenAI popularized the idea that model performance is a predictable function of three variables: the number of parameters, the amount of compute, and the volume of training data. For years, the graph moved in a beautiful, upward diagonal. Each doubling of the data produced a predictable drop in the model’s loss. However, as datasets push toward the 100-trillion-token scale, that curve is flattening: each further doubling buys a smaller improvement than the last.
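To make the flattening concrete, here is a minimal sketch of a power-law loss curve of the kind scaling-law papers fit. The functional form is a commonly used one; the constants and the helper names (`loss`, `gains_from_doubling`) are assumptions chosen for illustration, not measurements of any particular model.

```python
# Illustrative power-law loss curve: L(N, D) = E + A / N**alpha + B / D**beta
# N = parameter count, D = training tokens. All constants are assumptions
# picked for illustration, not properties of any specific model.
E, A, B = 1.69, 406.4, 410.7
ALPHA, BETA = 0.34, 0.28


def loss(n_params: float, n_tokens: float) -> float:
    """Predicted loss for a model with n_params trained on n_tokens."""
    return E + A / n_params**ALPHA + B / n_tokens**BETA


def gains_from_doubling(n_params: float, start_tokens: float, doublings: int) -> list[float]:
    """Loss improvement obtained by each successive doubling of the data."""
    gains = []
    tokens = start_tokens
    for _ in range(doublings):
        gains.append(loss(n_params, tokens) - loss(n_params, 2 * tokens))
        tokens *= 2
    return gains


if __name__ == "__main__":
    # Hypothetical 70B-parameter model, starting from 1 trillion tokens.
    for i, gain in enumerate(gains_from_doubling(70e9, 1e12, 6), start=1):
        print(f"doubling {i}: loss improves by {gain:.4f}")
```

Under these assumed constants, every doubling still helps, but each one helps less than the one before, and the loss can never fall below the irreducible term `E` no matter how much data is added.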

The problem is quality. A model typically learns more from 1,000 pages of a peer-reviewed physics journal than it does from 100,000 pages of YouTube comments. By now, the “gold standard” datasets (Common Crawl, Books3, and GitHub) have been ingested multiple times. Reusing the same data for a newer model doesn’t necessarily make it smarter; it just makes it more likely to memorize the training set rather than generalize to new problems. This is the plateau that keeps AI researchers awake at night.

The ‘Enshittification’ of Training Data

The internet is currently being flooded with AI-generated content. From “slop” on Facebook to auto-generated product reviews on Amazon, the signal-to-noise ratio is plummeting. If OpenAI trains GPT-5 on data produced by GPT-4, the newer model risks inheriting the quirks, hallucinations, and biases of its predecessor. Repeat that cycle and you get what researchers call “Model Collapse.” Within just a few generations of AI-on-AI training, the models can become nonsensical, losing the creative “long tail” of human thought that makes them useful in the first place.
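The loss of the “long tail” can be illustrated with a toy simulation. This is a sketch under strong assumptions: the “model” is just a bell curve fitted to a handful of samples, and each generation trains only on samples drawn from the previous generation's fit. The function name `simulate_collapse` and its parameters are hypothetical, and real language models are far more complex.

```python
import random
import statistics


def simulate_collapse(generations: int = 200, samples_per_gen: int = 10, seed: int = 0) -> list[float]:
    """Track the spread (standard deviation) of a toy model across generations.

    Generation 0 is the "human" distribution: mean 0, spread 1. Every later
    generation is fitted only to samples produced by the generation before it.
    """
    rng = random.Random(seed)
    mean, std = 0.0, 1.0
    history = [std]
    for _ in range(generations):
        # "Generate" data from the current model...
        data = [rng.gauss(mean, std) for _ in range(samples_per_gen)]
        # ...then "train" the next model on that data alone.
        mean = statistics.fmean(data)
        std = statistics.pstdev(data)
        history.append(std)
    return history


if __name__ == "__main__":
    history = simulate_collapse()
    for gen in (0, 10, 50, 100, 200):
        print(f"generation {gen:3d}: spread = {history[gen]:.6f}")
```

Because each fit sees only a small sample, rare values from the tails tend to go missing, and the next generation can never get them back. Over many rounds the spread drifts toward zero, which is the toy version of a model forgetting everything unusual.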

The Pivot to ‘Reasoning’ and System 2 Thinking

Since more data isn’t the answer, OpenAI and its competitors are looking at how models use the data they already have. This is the core of the “Strawberry” project (now known as the o1 series). Instead of just predicting the next word in a sentence—which is essentially a very fast intuition—these models are being trained to pause and use “chain-of-thought” processing.

Psychologists, following Daniel Kahneman, call this System 1 and System 2 thinking. System 1 is fast, instinctive, and emotional (your brain immediately knowing 2+2=4). System 2 is slower, more deliberative, and logical (your brain solving 14 × 27). Traditional LLMs are almost entirely System 1. The move toward “reasoning” models is an attempt to install a System 2 circuit.

Test-Time Compute: The New Frontier

If you can’t increase the training data, you can increase the amount of “thinking time” during the inference phase. When you ask a reasoning model a hard question, it might take 15 seconds to answer. During those 15 seconds, it identifies its own mistakes, tries different paths, and double-checks its logic before presenting the final result. On some tasks, this “test-time compute” can allow a smaller model to outperform a massive one by simply working harder on the specific problem at hand. Several related techniques support this shift:

- Verification over Generation: Models are being trained to act as “verifiers,” checking the work of other models to ensure logical consistency.

- Synthetic Data Generation: Instead of scraping the messy web, companies are using models to create “perfect” data—logical puzzles, math proofs, and clean code—to train the next generation.

- Reinforcement Learning (RL): Models are rewarded, through human feedback or automated “correctness” markers, for good reasoning steps, not just the correct final answer.

The Paywall Paradox: Data is the New Oil (Seriously)

As the “free” internet disappears, the cost of high-quality human data is skyrocketing. Reddit, formerly a goldmine for conversational data, now charges millions for API access. The New York Times is suing OpenAI, alleging that its copyrighted articles were used without permission. News Corp recently signed a licensing deal with OpenAI, reportedly worth more than $250 million, to provide content.

This creates a massive barrier to entry. In the early days, a few guys in a garage could scrape the web and build a decent model. Today, the “Big Three” (Google, Microsoft/OpenAI, and Meta) are among the few players with the deep pockets required to buy the rights to the world’s libraries. We are seeing a shift from an open data culture to a walled-garden approach where data is licensed like intellectual property.

Beyond Text: Video and Sensory Data

If text is running out, where do we go next? The answer lies in pixels. Meta and Google are sitting on a treasure trove of video data through Instagram Reels and YouTube. Video provides something text cannot: an understanding of physical space, cause and effect, and gravity. A model that watches a million hours of people cooking or building furniture understands the world differently than one that just reads recipes. However, training on video is computationally expensive—orders of magnitude more difficult than processing text strings.

The Silicon Valley Search for ‘Perfect’ Records

We are seeing a move toward “Deep Data” rather than “Big Data.” Companies are now hiring thousands of PhDs and subject matter experts—chemists, lawyers, and senior software engineers—to write “gold” responses. This high-touch approach creates a curriculum for the AI. If the internet is a chaotic public square, these curated datasets are a private university.

OpenAI’s use of RLHF (Reinforcement Learning from Human Feedback) was the first step, but the new phase involves “Self-Explanatory AI.” The model doesn’t just give you the answer; it explains the logic. This explanation then becomes new training data. By forcing the AI to articulate its reasoning, engineers can find where the logic breaks and patch it, effectively refining the model’s “mental model” of the world.
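As a rough illustration of finding “where the logic breaks,” the sketch below checks a written-out reasoning trace step by step and keeps only the traces that survive as candidate training data. The trace format (operation, two inputs, claimed result) and the helper names are hypothetical, chosen only to make the idea concrete.

```python
import operator

# Supported operations for this toy trace format (an assumption of the sketch).
OPS = {"add": operator.add, "sub": operator.sub, "mul": operator.mul}

# Each step is (operation, left input, right input, claimed result).
good_trace = [("mul", 14, 20, 280), ("mul", 14, 7, 98), ("add", 280, 98, 378)]
bad_trace = [("mul", 14, 20, 280), ("mul", 14, 7, 88), ("add", 280, 88, 368)]


def first_broken_step(trace):
    """Return the index of the first step whose claimed result is wrong, or None."""
    for index, (op, left, right, claimed) in enumerate(trace):
        if OPS[op](left, right) != claimed:
            return index
    return None


def keep_verified(traces):
    """Keep only traces in which every step checks out."""
    return [trace for trace in traces if first_broken_step(trace) is None]


if __name__ == "__main__":
    print("good trace breaks at:", first_broken_step(good_trace))
    print("bad trace breaks at:", first_broken_step(bad_trace))
    print("traces kept for training:", len(keep_verified([good_trace, bad_trace])))
```

Note that the last step of the bad trace is internally consistent (280 + 88 really is 368). A check on the final answer alone would say only that the answer is wrong, while a step-level check points at the faulty multiplication that caused it.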

The Human Edge in a Post-Data World

Does the end of the “infinite internet” mean AI will stop improving? Probably not. It just means the era of easy scaling gains is over. We are likely to see a “Cambrian Explosion” of specialized models. Instead of one giant model that knows everything about everything, we will have models trained on specialized, high-integrity data for medicine, law, and engineering.

For humans, this shift is actually a vote of confidence. The fact that AI companies are running out of data suggests that human creativity and unique perspectives are scarce and precious resources. Pure mimicry of the web can only go so far. To get to the next level (Level 5 AGI), the machines need to learn how to wonder, check their own work, and perhaps eventually, create brand-new knowledge rather than just remixing what we’ve already said.

The Great Data Wall isn’t a dead end; it’s a pivot point. The focus has moved from the quantity of the “what” to the quality of the “how.” As AI models begin to spend more time “thinking” and less time “reciting,” we may find that the best way to train an intelligent system isn’t to give it more information, but to teach it how to use the information it already has. The next leap in artificial intelligence won’t come from a bigger crawler or a faster scraper—it will come from the quiet logic of a model that knows how to think before it speaks.

Frequently asked questions

What happens if AI trains only on synthetic data?

If AI models are trained on too much AI-generated content, they accumulate errors and lose nuance. Over successive generations this can end in ‘model collapse,’ where the output becomes gibberish.

Why can’t AI companies just keep scraping the web?

Social media companies like Reddit and X (formerly Twitter) are now charging millions for API access, and news organizations are filing lawsuits or signing exclusive licensing deals to protect their archives.

What is the difference between scaling and reasoning?

Model scaling focuses on the size of the dataset and parameters, while reasoning focuses on ‘test-time compute’—giving the model more time to think through a problem logically before answering.

What is the ‘Strawberry’ approach in AI training?

OpenAI’s Strawberry (o1) uses reinforcement learning to reward the model for correct chains of thought, allowing it to solve complex math and coding problems that standard GPT-4 couldn’t handle.